In the fast-evolving world of deep learning, two frameworks dominate the landscape: TensorFlow and PyTorch. Whether you’re a researcher building cutting-edge models or an engineer deploying AI at scale, choosing the right framework can shape your development experience and outcomes.

Let’s break down the key differences, strengths, and ideal use cases for each.

Core Differences at a Glance

| Feature | TensorFlow | PyTorch |

|---|---|---|

| Developer | Facebook (Meta) | |

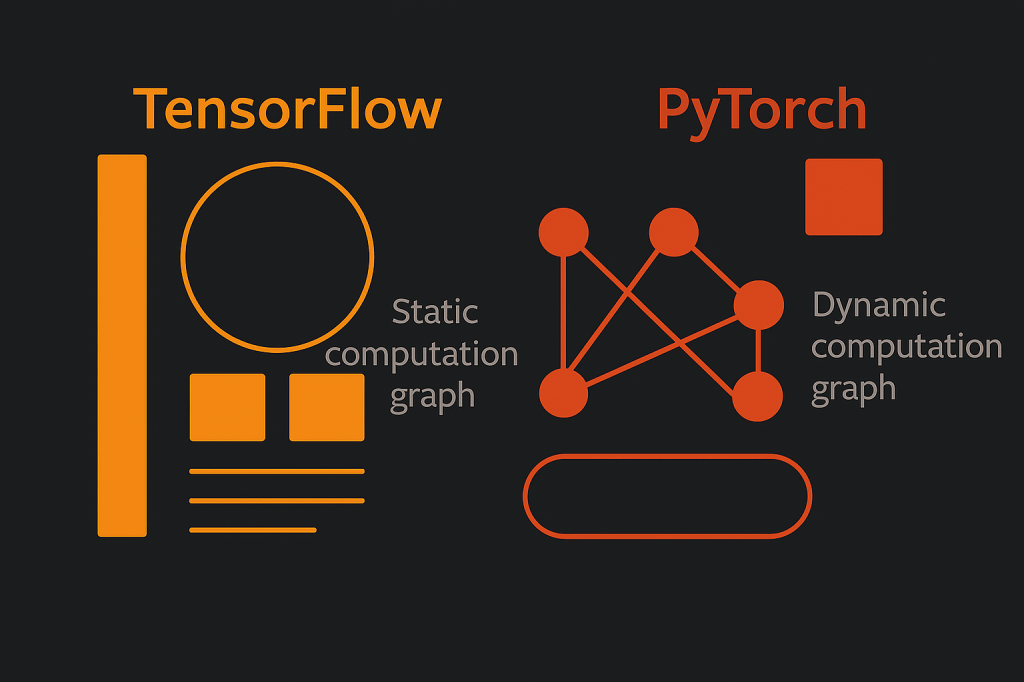

| Computation Graph | Static (define-then-run) | Dynamic (define-by-run) |

| Ease of Use | Steeper learning curve | More intuitive and Pythonic |

| Deployment Tools | TensorFlow Lite, TensorFlow.js, TFX | TorchScript, ONNX (less mature) |

| Visualization | Built-in TensorBoard | Requires third-party tools |

| Ecosystem | Rich (Keras, TFX, Model Garden) | Leaner but growing (TorchServe, PyTorch Lightning) |

| Research Adoption | Historically strong in production | Widely preferred in academia and research |

| Performance Tuning | Excellent for distributed training | Great for experimentation and debugging |

| Community Support | Large, mature ecosystem | Rapidly growing, especially in AI research |
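The Computation Graph row is easier to see in code. The toy sketch below uses plain Python only. `Node`, `placeholder`, `run` and `eager` are hypothetical names and are not any framework's API. The first half mimics define-then-run: you build a symbolic graph, then execute it with concrete inputs, roughly the way TensorFlow 1.x placeholders and sessions worked. The second half mimics define-by-run: operations execute immediately, and the sequence of operations that gets recorded can change from one input to the next.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# --- Toy define-then-run: describe the computation first, execute it later ---
@dataclass(frozen=True)
class Node:
    op: str           # "input", "add" or "mul"
    args: Tuple = ()  # child Nodes, or (name,) for an "input" node

def placeholder(name: str) -> Node:
    return Node("input", (name,))

def add(a: Node, b: Node) -> Node:
    return Node("add", (a, b))

def mul(a: Node, b: Node) -> Node:
    return Node("mul", (a, b))

def run(node: Node, feed: Dict[str, float]) -> float:
    """Evaluate an already-built graph, substituting concrete input values."""
    if node.op == "input":
        return feed[node.args[0]]
    left, right = (run(child, feed) for child in node.args)
    return left + right if node.op == "add" else left * right

x = placeholder("x")
graph = add(mul(x, x), x)           # describes x*x + x; nothing is computed yet
static_result = run(graph, {"x": 3.0})

# --- Toy define-by-run: each operation executes (and is recorded) as it runs ---
def eager(x: float, tape: List[str]) -> float:
    y = x * x
    tape.append("mul")
    if y > 5:                       # plain Python branch on a runtime value
        y = y + x
        tape.append("add")
    return y

tape_a: List[str] = []
tape_b: List[str] = []
print(static_result, eager(3.0, tape_a), tape_a)
print(eager(2.0, tape_b), tape_b)
```

Note the contrast. `graph` is built once and can be re-run with any feed, but a data-dependent branch would have to be encoded as a node of the graph itself. In `eager`, the branch is just Python, and the two calls record different operation sequences.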

When to Use What?

Choose PyTorch if:

- You’re focused on research or rapid prototyping

- You prefer clean, Pythonic code

- You need dynamic computation graphs (e.g., variable input sizes or conditional logic)
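Here is a minimal sketch of what the dynamic-graph bullet means in practice, assuming PyTorch is installed. The `forward` function and its toy loss are hypothetical. The branch is ordinary Python evaluated on tensor values, and autograd records only the path that actually ran. As a result, inputs of different lengths and different branches need no special graph constructs.

```python
import torch

def forward(x: torch.Tensor, w: torch.Tensor) -> torch.Tensor:
    # x may have any length; autograd builds a fresh graph on every call.
    h = x * w
    # Data-dependent branch in plain Python: only the executed path is recorded.
    if h.sum() > 0:
        return (h ** 2).sum()
    return h.abs().sum()

w = torch.tensor(2.0, requires_grad=True)

loss_a = forward(torch.tensor([1.0, 2.0, 3.0]), w)  # takes the squared branch
loss_a.backward()
grad_a = w.grad.item()

w.grad = None                                        # clear the accumulated gradient
loss_b = forward(torch.tensor([-1.0, -4.0]), w)     # shorter input, abs branch
loss_b.backward()
grad_b = w.grad.item()

print(loss_a.item(), grad_a, loss_b.item(), grad_b)
```

For comparison, TensorFlow 2.x also runs such a function eagerly. When the function is wrapped in tf.function, AutoGraph rewrites tensor-dependent if statements into graph operations such as tf.cond.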

Choose TensorFlow if:

- You’re building production-grade systems

- You need cross-platform deployment (mobile, web, embedded)

- You want robust tooling for monitoring, scaling, and serving
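The serving and cross-platform points rest largely on TensorFlow's SavedModel format. TF Serving loads it directly, and the TensorFlow Lite converter accepts it as input. Below is a minimal sketch, assuming TensorFlow 2.x is installed. The `Scaler` module and its weights are hypothetical and exist only for illustration.

```python
import tempfile

import tensorflow as tf

class Scaler(tf.Module):
    """Hypothetical toy model: y = w * x + b."""

    def __init__(self):
        super().__init__()
        self.w = tf.Variable(2.0)
        self.b = tf.Variable(1.0)

    # The input signature lets TensorFlow trace a single graph that accepts
    # float32 vectors of any length; that traced graph is what gets exported.
    @tf.function(input_signature=[tf.TensorSpec(shape=[None], dtype=tf.float32)])
    def __call__(self, x):
        return self.w * x + self.b

export_dir = tempfile.mkdtemp()
tf.saved_model.save(Scaler(), export_dir)   # writes a SavedModel directory

restored = tf.saved_model.load(export_dir)  # the Python class is not needed here
print(restored(tf.constant([1.0, 2.0, 3.0])).numpy())
```

The exported directory is the artifact TF Serving loads. It can also be passed to tf.lite.TFLiteConverter.from_saved_model for mobile or embedded targets.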

Real-World Adoption

- TensorFlow powers production systems at companies like Google, Uber, and Airbnb. Its mature ecosystem and deployment tools make it ideal for enterprise-scale applications.

- PyTorch is the framework of choice for OpenAI’s GPT models, Tesla’s Autopilot, and many academic labs. Its flexibility and ease of use make it a favorite for innovation and experimentation.

Final Thoughts

Both TensorFlow and PyTorch are powerful, actively developed, and widely supported. Your choice should depend on your goals:

- For research and experimentation, PyTorch offers unmatched flexibility.

- For scalable deployment and production, TensorFlow provides a robust, end-to-end pipeline.

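If you’re working with Azure ML or exploring ONNX for model interoperability, both frameworks offer integration paths. PyTorch can export models directly with torch.onnx.export, and TensorFlow models can be converted with the tf2onnx tool. In practice, PyTorch often feels more natural for development, while TensorFlow shines in deployment.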

Still on the fence? I’ve been there too :) Sometimes the best way to decide is to roll up your sleeves and build something small in both frameworks. Whether it’s a sentiment analyzer or a mini-RAG pipeline, you’ll quickly get a feel for which one clicks with your thinking style. At the end of the day, the “best” framework is the one that lets you move fast, debug with ease, and build with confidence.

That’s my pet ‘Ruby’, and I am taking confidence lessons from her :)
